The thing about Pandora’s box is that once it is opened, it is very hard to close. Neural networks have become a sort of Pandora’s box; at the very least there is a lot of hope involved. Although conceptually they have been around for decades, it is only recently, as general-purpose GPUs democratized access to massively parallel computing, that they have moved from obscure computer science into the practical realm. Today neural networks are at the center of nearly every complex computer vision application. Their success is due to their ability to recognize and learn patterns from complex data. The state-of-the-art algorithm for automated image recognition and object tracking is the neural network.

Imagine boarding a plane without a cockpit and flying around the world with no pilot. Imagine if the results of medical tests could be reviewed and diagnosed instantly instead of waiting on a single overworked pathologist. Some automotive companies are promising fully autonomous cars driving on our highways in the next three years. Whether or not there is a scientific basis for such optimism is debatable. It is possible to argue about how close such a future lies, but one thing is clear: whenever that future arrives, it will have neural networks in it.

The Question of Safety

Given the emerging importance of neural networks in safety critical environments, a natural question is: “Are neural networks safe?”

Before proceeding to reflect on that question, it’s important to mention that some people argue the question is actually irrelevant; neural networks are supposedly too complex and over-hyped, and long before we have to deal with certifying them a new algorithm will emerge that will render neural networks obsolete. I would like to point out that this line of thought is laughably shortsighted and flawed. First, neural networks are already a part of the safety critical world. Indeed, Tesla makes extensive use of them in their current fleet of “autopilot” enabled cars, and hospital labs are relying more and more on deep learning algorithms to help them diagnose patients. Second, the potential emergence of a better algorithm cannot render neural networks obsolete, just as the invention of cars did not make bicycles disappear. Neural networks have proven to be useful and capable tools and, whether we like them or not, they are here to stay. In that case we had better spend the time ensuring we understand how they work, and whether they can be used safely.

So, back to the safety question: can neural networks be made safe? Thankfully, there are many researchers trying to answer this question. Unfortunately, many of these researchers, and almost everyone in industry, are missing half of the picture. If this seems like a provocative statement made for effect, it is not; my work would be much easier if it were.

When evaluating a system for a safety critical environment we need to consider two aspects:

- The expression of a system.

- The execution of a system.

Expression

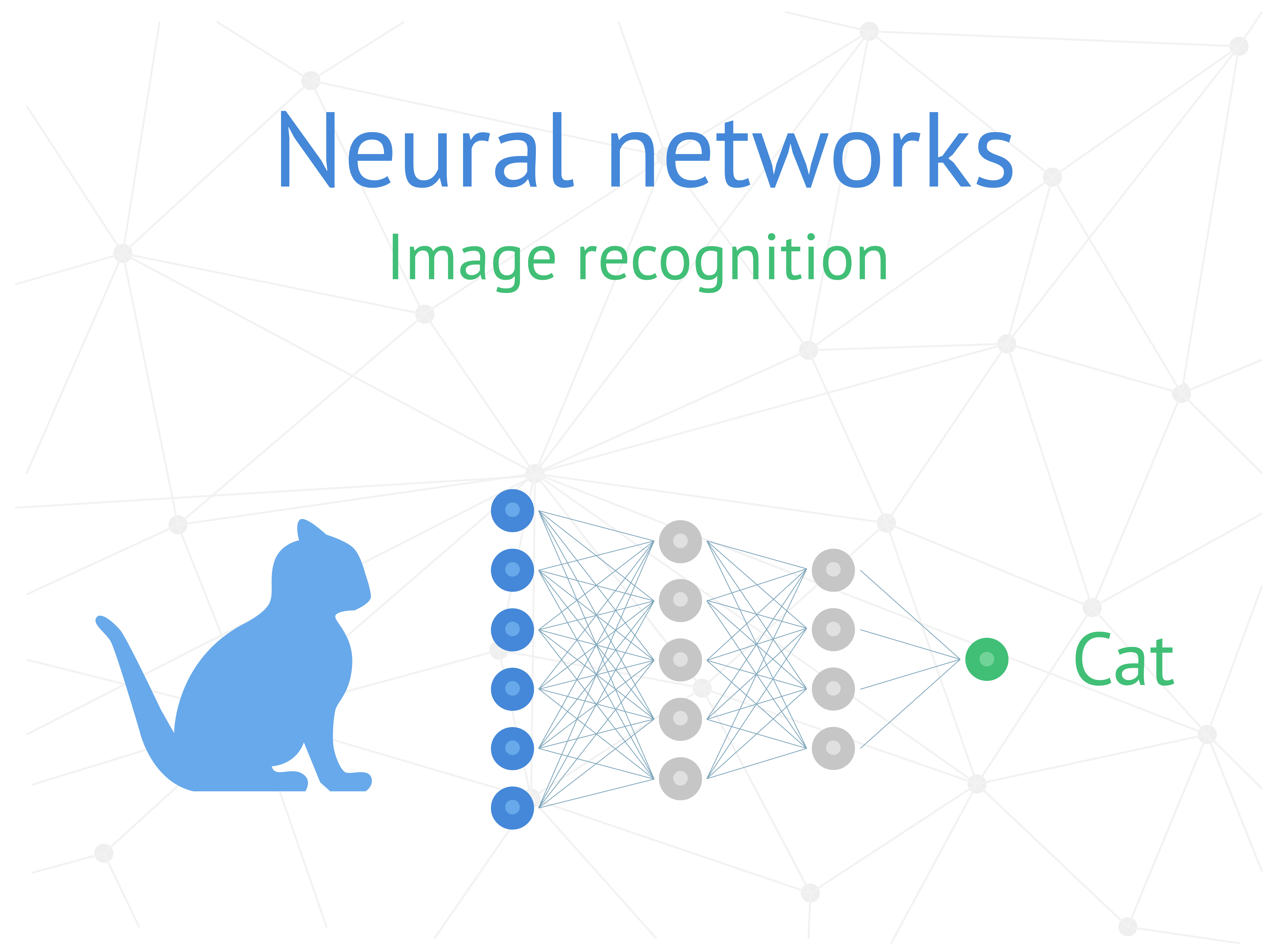

In the case of a neural network, the expression is its output. When presented with an image of a cat, the network should classify the image as a cat. But no system is perfect and sometimes neural networks misfire. Instead of a cat, the network may classify the image as a watermelon. Even neural networks trained to a high degree of accuracy (>97%) may make silly mistakes such as these. And while classifying a picture of a cat as a watermelon is simply a minor comical gaffe, mistaking a lamppost for a person could have much worse consequences.

Understanding why a mistake happens is problematic with neural networks. Traditional rule-based algorithms make it possible to trace the path from an input to an output and understand which decisions caused a given result. Neural networks are different. They learn the rules progressively based on thousands of training examples; it is not possible to walk back from the answer to the input to see what caused the network to make a mistake. A specific result is not driven by a single input, or rule; it’s driven by all the previous inputs. This is the expression problem, and it is the area that is grabbing everyone’s attention today. It is important that we understand how a neural network misfires to be able to quantify its limitations and gain an understanding of what conditions could cause it to fail. Without this level of understanding it is not possible to tell how safe a neural network is.

The good news is that there are many researchers trying to understand how a neural network arrives at a given prediction. A big area of research today is using simulations to at least expose the landscape of inputs that can cause the system to fail. There are those who propose that simulation is all that is necessary to certify a system as safe. After all, if we can run billions of simulations and show that a system is orders of magnitude more reliable than its human counterpart, then why do we need to do anything else?

Execution

This leads us to the second aspect of a neural network that must be proven safe: the execution. It is important to point out that showing that a neural network’s expression is reliable does not address whether the execution of the neural network is deterministic or safe. Simulations may prove useful in increasing confidence in the reliability of the neural network’s expression, but they say very little about how reliable the execution is under real-life conditions.

Safety critical guidelines typically require that software execute deterministically in space and time. Safety critical systems are managed by real-time operating systems, where executing processes are carefully choreographed through fixed time slices, so a system integrator needs to be able to calculate the worst-case execution time of a piece of code to ensure that it can execute in a given amount of time. Similarly, system resources such as memory are carefully carved out and split among the different running processes. Safety critical software needs to be able to allocate system resources predictably so that the worst-case allocation size is known, and the system will not suddenly run out of memory at runtime.
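To make “deterministic in space and time” concrete, here is a minimal sketch in C of a single dense layer written in that style. Everything in it is an illustrative assumption rather than a real product API: the layer sizes, the weights, the memory budget, and the names `infer` and `MEM_BUDGET_BYTES` are all hypothetical. The point is only that every buffer is statically allocated, the worst-case memory footprint is checked at build time, and every loop bound is a compile-time constant.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical layer sizes, fixed at compile time. */
#define N_IN   4
#define N_OUT  3

/* Hypothetical memory budget handed to this process by the integrator. */
#define MEM_BUDGET_BYTES 64u

/* Build fails if the worst-case buffer size exceeds the budget. */
static_assert(sizeof(float) * (N_IN + N_OUT) <= MEM_BUDGET_BYTES,
              "worst-case buffer size exceeds the memory budget");

/* Illustrative constant weights and biases (not from a trained network). */
static const float k_weights[N_OUT * N_IN] = {
    1.0f, 0.0f, 0.0f, 0.0f,
    0.0f, 1.0f, 0.0f, 0.0f,
    0.0f, 0.0f, 1.0f, 1.0f
};
static const float k_bias[N_OUT] = { 0.5f, 0.0f, -1.0f };

/* Output buffer is static: no heap allocation happens at runtime. */
static float g_out[N_OUT];

/* Dense layer followed by ReLU.
 * The loops always perform exactly N_OUT * N_IN multiply-adds,
 * regardless of the input values. */
const float *infer(const float in[N_IN])
{
    for (size_t o = 0; o < N_OUT; ++o) {
        float acc = k_bias[o];
        for (size_t i = 0; i < N_IN; ++i) {
            acc += k_weights[o * N_IN + i] * in[i];
        }
        g_out[o] = (acc > 0.0f) ? acc : 0.0f;
    }
    return g_out;
}
```

Because nothing here is sized or allocated at runtime, an integrator can read the worst-case memory use straight from the declarations, and the fixed loop bounds give a worst-case execution time analysis something tractable to work with. An actual certification effort would of course involve far more than this.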

The execution problem can only be solved by building Artificial Intelligence (AI) systems on top of certifiable software stacks. Running simulations, or any other attempt at increasing confidence in a network’s predictions, solves only half of the problem: it helps to demonstrate that the output of the network is reliable and should be trusted. But running system simulations by generating distributions of inputs does not reveal any information about how multiple processes of differing priority levels can share common resources in a safety critical environment. It does not give any insight into what happens when the CPU is overwhelmed by requests from a different executing thread. The naïve answer is to simulate ad nauseam and add these scenarios to our list of use cases. The answer is naïve because it only makes sense on the surface: we can add scenarios to our simulations and increase our confidence that the system is safe. Worse than naïve, it is flawed, because it assumes that execution scenarios can really be simulated. As already stated, safety critical systems consist of multiple processes competing for shared resources in real time, where it is crucial to have a detailed layout of each process’s resource usage and execution requirements. These real-life interactions are very difficult to simulate properly but, most importantly, we do not have to. When it comes to execution, we know how to certify systems, and there are established processes in place which government entities have adopted.

It is important to note that proving the safety of a neural network’s expression is quite different from proving the safety of its execution. To prove that a neural network executes deterministically, we do not have to know how a neural network misfires; we just need to know that, whether it succeeds or misfires, it always executes predictably, uses the same amount of resources, and executes within a given time envelope. This is important to guarantee the integrity of not just the process executing the AI, but of every other process executing on and sharing the hardware system. To certify a neural network implementation for safety critical systems, both the expression and the execution of the neural network must be proven safe.
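The separation between expression and execution can be illustrated with a small, hedged C sketch. The function `argmax_fixed` and its comparison counter are hypothetical instrumentation invented for this example, and the counter is only a rough proxy: real worst-case execution time analysis is performed on the target hardware with dedicated tooling. The sketch simply shows a classification step that does the same amount of work whether the answer turns out to be right or wrong.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical number of output classes, fixed at compile time. */
#define N_CLASSES 3

static_assert(N_CLASSES >= 1, "need at least one class");

/* Hypothetical instrumentation: comparisons performed by the last call. */
static unsigned g_compare_count;

/* Returns the index of the largest score.
 * There is no early exit, so the loop always performs
 * N_CLASSES - 1 comparisons, whatever the scores are. */
size_t argmax_fixed(const float scores[N_CLASSES])
{
    size_t best = 0;
    g_compare_count = 0;
    for (size_t i = 1; i < N_CLASSES; ++i) {
        ++g_compare_count;
        if (scores[i] > scores[best]) {
            best = i;
        }
    }
    return best;
}

/* Accessor for the instrumentation counter. */
unsigned last_compare_count(void)
{
    return g_compare_count;
}
```

If class 0 is “cat” and class 2 is “watermelon”, a score vector that yields the correct answer and one that yields the comical misfire both cost exactly `N_CLASSES - 1` comparisons. Whether the prediction is correct is an expression question; whether the call fits its time and memory envelope is an execution question, and it can be answered without knowing why the network was wrong.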

Now, you may say, well what is the big deal, why am I getting so worked up? If we agree that we have to solve both problems, so what if there is momentary tunnel vision? Why can’t we focus on the expression problem, and once we solve that we can move onto worrying about execution?

Unfortunately, there is an insidious consequence to waiting too long before worrying about execution. As we wait and focus on understanding neural network predictions, millions of lines of code are being written on top of platforms and libraries which are hopelessly uncertifiable. What will the industry do when the fog is lifted, and we emerge from the haze of the expression problem? How are we going to prove that the execution of these libraries and platforms is safe?

Are we really going to certify Python? Are we going to try to convince ourselves that we have simulated enough, so that we do not have to worry about the shaky house of cards we are standing on?

While the industry struggles to solve half the problem and ensure that the expression of neural networks can be proven safe, let us begin building our deployment systems on better foundations, and thus solve the other half of the problem by providing an AI platform built from the ground up using safety critical standard Application Programming Interfaces (APIs). CoreAVI is focused on the development of these Safe AI platforms to enable the future of neural network technology.